Palimpsest

With graphics: https://www.ashray.sh/blog/palimpsest

Brute force has a certain moral cleanliness to it. As long as you’re searching hard enough, thinking long enough, enumerating carefully enough, the optimum has to be in there somewhere. It suggests that the world is legible so long as you are willing to suffer enough.

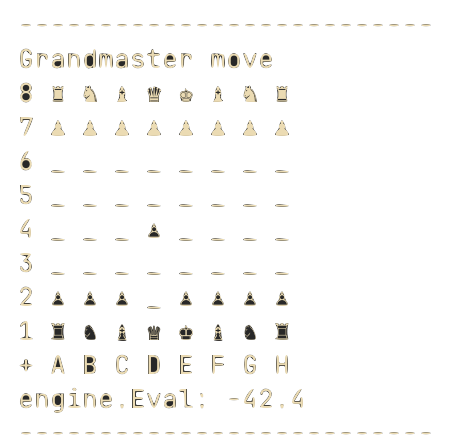

I wrote a chess bot in the seventh grade following this theory, coded over a weekend after losing a GamePigeon challenge in class. It was the pinnacle of innovation as far as I was concerned: every legal move, every response, every response to the response, six ply deep in messy single-threaded Java. It considered more positions than any kid in the room could hold in their head. Then it would walk its pawn into a structure some 10-year-old on Chess.com would instinctively avoid.

Better engines written with intention know better. Alpha-beta pruning lets them skip branches that provably can't change the final choice; modern engines go further and use heuristics to throw away or deprioritize entire lines that look bad before proving they are. The more you know about which branches to cut, the deeper you can search with the same compute. This seems true well beyond chess. Intelligence, at least in practice, seems to have a great deal to do with pruning some possibility-space. We call much of this intuition.
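To make the pruning concrete, here is a minimal sketch on a toy game tree rather than real chess. The nested-list tree, the leaf scores, and the names `minimax`, `alphabeta`, and `count_leaves` are all illustrative assumptions, not anything from my old bot. Both searches return the same value; the difference is how many leaves each has to look at.

```python
from typing import Callable, List, Tuple, Union

# A toy game tree: an int is a leaf score, a list is a position with children.
Tree = Union[int, List["Tree"]]


def minimax(node: Tree, maximizing: bool, counter: List[int]) -> float:
    """Brute force: visit every leaf, no questions asked."""
    if isinstance(node, int):
        counter[0] += 1  # count each leaf evaluation
        return node
    values = [minimax(child, not maximizing, counter) for child in node]
    return max(values) if maximizing else min(values)


def alphabeta(node: Tree, maximizing: bool, counter: List[int],
              alpha: float = float("-inf"), beta: float = float("inf")) -> float:
    """Same answer as minimax, but stops exploring a position once it
    provably cannot change the choice made higher up the tree."""
    if isinstance(node, int):
        counter[0] += 1
        return node
    if maximizing:
        best = float("-inf")
        for child in node:
            best = max(best, alphabeta(child, False, counter, alpha, beta))
            alpha = max(alpha, best)
            if alpha >= beta:  # the minimizer above already has something better
                break
        return best
    best = float("inf")
    for child in node:
        best = min(best, alphabeta(child, True, counter, alpha, beta))
        beta = min(beta, best)
        if alpha >= beta:  # the maximizer above already has something better
            break
    return best


def count_leaves(search: Callable[..., float], tree: Tree) -> Tuple[float, int]:
    """Run a search from the maximizing side; return (value, leaves visited)."""
    counter = [0]
    value = search(tree, True, counter)
    return value, counter[0]


# Hypothetical three-move position, each move with three replies.
TREE: Tree = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]
# Same position, but the last move's replies are tried in a luckier order.
ORDERED: Tree = [[3, 12, 8], [2, 4, 6], [2, 5, 14]]

if __name__ == "__main__":
    print(count_leaves(minimax, TREE))       # (3, 9)
    print(count_leaves(alphabeta, TREE))     # (3, 7)
    print(count_leaves(alphabeta, ORDERED))  # (3, 5)
```

The last line is the interesting one: nothing about the position changed, only the order in which replies were tried. A good guess about where to look first is worth real compute, which is roughly what move-ordering heuristics buy an engine.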

This turns out to be largely the result of a compiled binary (well, a quaternary) that set the priors before we were even born. Some of them are ancient: threat detection on a savannah I’ve never seen, pattern-matching for fruit I’ll never forage for, social priors calibrated for a tribe of a hundred and fifty¹. Others are newer: childhood habits of attention, the kinds of competence that got rewarded early enough. You’d have to take Ghidra² to the head before effectively deciphering any of it, and unfortunately, we are still mostly working on zebrafish and fly brains.

The trouble is that this stuff does not arrive as an argument. Phenomenologically, it feels like me. By the time a conviction reaches consciousness, the upstream mixture has already been flattened into the latent experience of obviousness. No wonder introspection feels so slippery.

There is a very curious phenomenon observed in split-brain patients where the left hemisphere will instantly fabricate a rationalization for why the body is doing something, even when it literally cannot know. The brain would rather lie than admit it’s guessing.

A lot of these priors are badly distribution-shifted. Loss aversion made excellent sense on a caloric knife-edge; it makes less in a world of Walmarts. These same heuristics misfire strangely in modernity. Our evaluators were made for the Rift Valley and then dropped into dealing with push notifications, perpetual futures, and building CUDA sm_120 from source.

The machine learning version of this is approximately pretraining. We love priors because the world is too large and too expensive to learn from scratch. Good initializations make search survivable. But with enough task-specific data, starting from scratch can outperform the warm start. There may be a human version of this too. What we inherit is indispensable because it makes contact with the world tractable in the first place. But under enough sustained exposure to reality, some of those same priors turn out to point toward a local minimum. There’s a reason AlphaZero’s alien sacrifices didn’t come from respecting common sense.

This is why the brain feels so embarrassingly hackable. We talk about neurotech as though it begins with electrodes and ultrasound, but much of modern life is already inapparent neurotech: music, fast food, the endless scrolling feed tuned by armies of gradient descenders. And frankly, these are often vastly more powerful than a physical neural interface. They don’t bother trying to out-argue your reasoning. Instead, they exploit the pruning itself. It is essentially a speculative execution attack on the human nervous system: abusing the exact predictive branches our biology left unguarded for the sake of speed.

It’s tempting to just treat our hunches as unimpeachable, and terribly dissonant to admit that they’re a series of lossy distillations. What are we then if not transplants from a different era trying to assimilate?

The task, then, is to not let nepotism, fear and convenience qualify the intuitions you hold. This is probably why strong new ideas so often feel a little stupid at first. So do strong people, sometimes. The strangeness of evidence shouldn’t be the evidence of strangeness³. If we are going to walk a pawn into a weird structure, we should do it when we see the line and not because we’re out of time. α

Thanks to divij & warren for feedback on drafts

¹ Dunbar's number